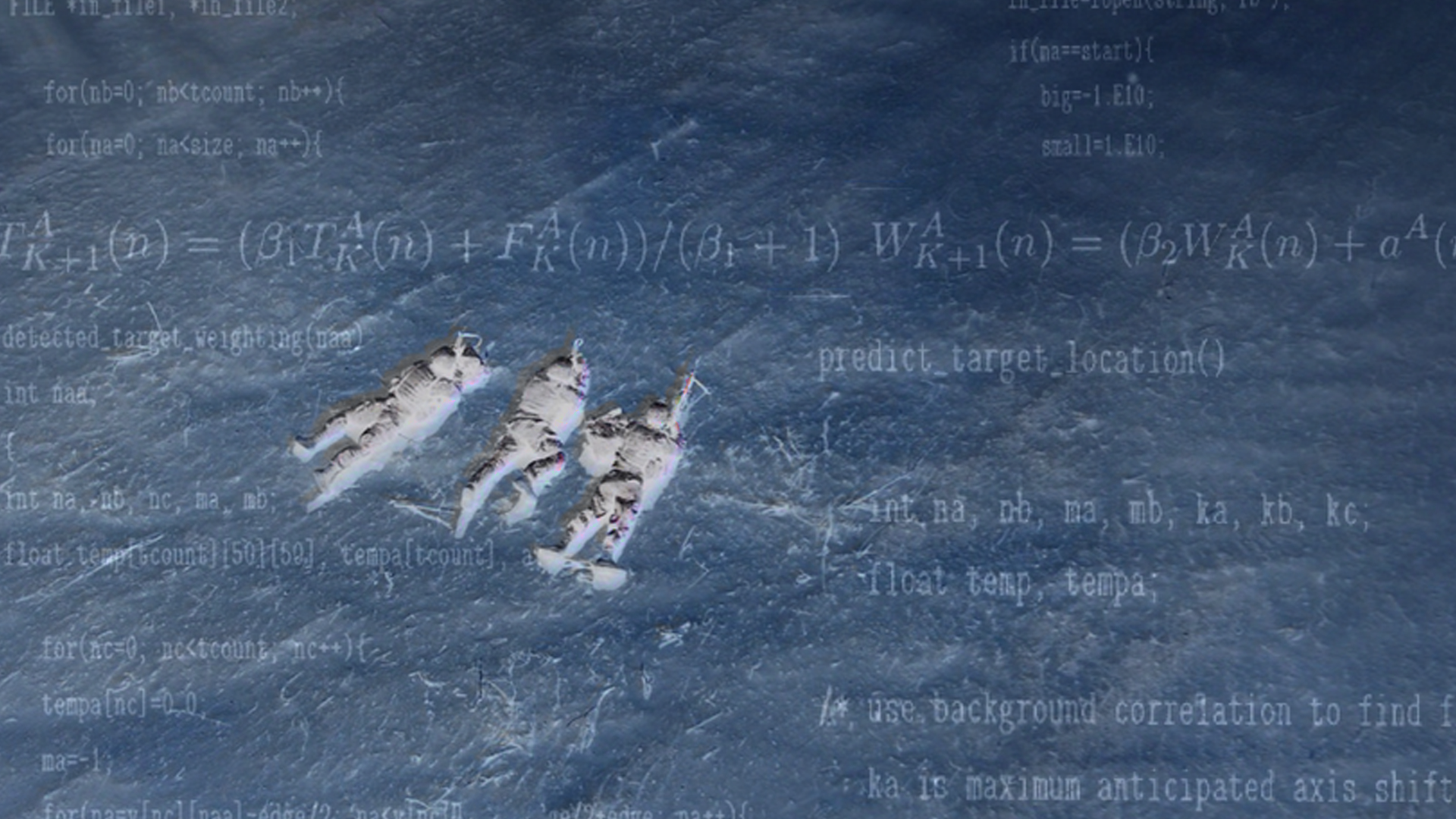

AI-enabled geospatial intelligence is reshaping military competition, but the revolution is narrower, and in some ways more insidious, than its most dramatic advocates suggest.

In February 2026, as the United States accelerated its military build-up around Iran, a Chinese geospatial intelligence company called MizarVision began circulating satellite imagery that was striking not for its resolution but for its organisation. High-definition photographs of American bases in Jordan, Qatar, and Saudi Arabia were annotated, labelled, and formatted into something closer to an operational briefing than a news photograph. Carrier positions, runway activity at al-Udeid and Ovda, logistics signatures at forward operating sites: all machine-sorted and publicly distributed in near real time.

The episode attracted commentary framing it as proof that a new era of warfare had arrived, one in which the first shot would be fired not from a runway or missile silo but by an algorithm that had already identified every target from orbit. That framing captures something real. But it also obscures the more precise and more actionable strategic truth.

The Shift That Actually Happened

Satellite imagery is not new. What is new is that machine vision can now process it at a scale and speed no human organisation can match. For decades, the constraint on imagery intelligence was not collection but interpretation. Trained analysts, compartmented bureaucracies, and slow dissemination pipelines throttled the operational value of what satellites could see. That bottleneck has moved. Computer vision systems can now detect and classify objects across a commercial imagery feed, and disseminate the findings, faster than any national intelligence centre.

The significance of this shift is not merely technical. It is structural. What once required a state-level investment in imagery exploitation infrastructure can increasingly be approximated by software running on commercial cloud architecture and trained on open-source data. The private sector is no longer merely supplying pictures. It is supplying theatre awareness, and it is supplying it to anyone with the access and the model.

This produces a political asymmetry that Western governments have been slow to absorb. During the Ukraine war, commercial satellite imagery was widely celebrated as a democratising force: a check on Russian operational deception, a tool for accountability, a structural advantage for the transparency-friendly side of the conflict. The implicit assumption was that open-source overhead surveillance naturally served liberal democratic interests. MizarVision and the Chinese geospatial intelligence infrastructure behind it dispose of that assumption cleanly. Structural capabilities do not carry ideological loyalty. The West helped normalise the instrument. It cannot now monopolise its use.

Where the Argument Runs Ahead of the Evidence

The leap from “AI can classify a carrier strike group” to “an algorithm fires the first shot” is too large, and the gap between them matters strategically. Commercial imagery at fifty-centimetre resolution can confirm the presence of an F-35 on a ramp. It cannot reliably determine its fuel state, weapons load, maintenance window, or sortie schedule. The difference between knowing an asset exists and knowing precisely when it is vulnerable is the difference between surveillance and targeting. Conflating them overstates the current operational readiness of these systems and, more importantly, misidentifies where the real danger lies.

Authorisation also still lies with humans. In every major military doctrine (American, Chinese, and Russian alike), kinetic action requires a political decision. An algorithm that has identified every target from orbit still requires a commander, and ultimately a head of state, to act on that picture. The compression of the sensing-to-understanding timeline does not automatically compress the understanding-to-decision timeline. Institutional friction, escalation calculus, and signalling dynamics all intervene. Treating the kill chain as a purely technical problem mistakes software capability for strategic agency.

Countermeasure adaptation is also systematic, not absent. Emissions control, signature management, deliberate deception, dispersal, and spoofing are active and evolving military disciplines, and they are evolving in direct response to persistent overhead surveillance. The question is not whether these countermeasures exist but whether they remain sufficient at scale. That is a genuine empirical question, and one that defence establishments are actively working through. Presenting militaries as passive recipients of surveillance pressure misreads both their doctrine and their investment priorities.

The Risk That Is Actually There

The genuine strategic danger is narrower than autonomous AI warfare, and in some ways more insidious for it. AI-enabled geospatial intelligence shortens the window between a commander’s decision and the availability of an actionable targeting picture. It reduces the cost of continuous monitoring for state and non-state actors alike. And it creates new pathways for sensitive operational intelligence to enter public or adversarial channels through commercial intermediaries who are not bound by the classification architectures that have traditionally governed such material.

These are serious problems. They demand serious investment in signature management, commercial imagery governance, and a harder-edged understanding of escalation dynamics in a world where both sides can observe each other’s preparations in near real time. But they do not support the conclusion that interpretive speed alone determines who prevails in future conflict, or that the pre-kinetic phase has already superseded the kinetic one.

The algorithm does not fire the first shot. It narrows the decision space of the human who does. In a world moving as fast as this one, that is consequential enough to deserve serious attention on its own terms, without inflation.